Why your brand is not showing up in ChatGPT or Perplexity answers when you rank on Google

You rank on Google. Your pages get traffic. You might even hold strong positions for commercial keywords.

So why does your brand still go missing when buyers ask ChatGPT or Perplexity for recommendations?

Because Google rankings and AI visibility are not the same game.

That is the uncomfortable answer, but it is also the useful one.

Your brand is not absent from ChatGPT or Perplexity because SEO “stopped working.” It is absent because these systems use a narrower, stricter, more recommendation-oriented set of signals than Google does. They do not simply mirror SERPs. They retrieve, compress, compare, and name the sources they can confidently use in an answer. If your brand is not easy to retrieve, easy to verify, and easy to compare, you get left out.

From a Content RevOps perspective, this matters because AI visibility is not a branding side quest. It is now part of your demand capture layer. If buyers research in AI interfaces before they ever visit your site, being omitted from those answers is not just a visibility issue; it is a pipeline issue. Let’s dive in!

Google rank does not equal AI retrieval

A lot of teams assume a strong Google position should naturally spill over into ChatGPT and Perplexity. It often does not.

Why not?

Because Google can rank a page that is broadly authoritative, link-worthy, or technically strong. AI answer engines need something stricter. They need content they can quickly parse into a recommendation. That usually means explicit facts, clean positioning, clear capabilities, and evidence that other sources recognise you in the category.

In other words, Google can reward discoverability. AI systems reward retrievability and answerability.

That gap is where most brands disappear.

This is why plenty of companies with decent SEO still do not get named in AI answers, while smaller or less dominant brands do. The winner in AI search is often not the brand with the strongest overall domain. It is the brand with the clearest machine-readable case for inclusion.

AI engines want proof, not just presence

If your site ranks, but your messaging stays vague, your pages may still fail the AI test.

Most B2B sites talk like this:

“We deliver innovative solutions for modern businesses.”

That kind of copy does not help an LLM decide whether you should appear in a shortlist.

AI systems look for explicit relevance. They need to understand, fast:

what you do

who you do it for

what specific use cases you support

what standards, certifications, integrations, or proof points you have

why you are a fit for the exact question being asked

This becomes even clearer when you look at industry specifics.

We analysed 431 brands cited by ChatGPT across a set of 62 bottom-funnel prompts, split across three industry verticals: manufacturing, life sciences, and edtech. Here’s what we found:

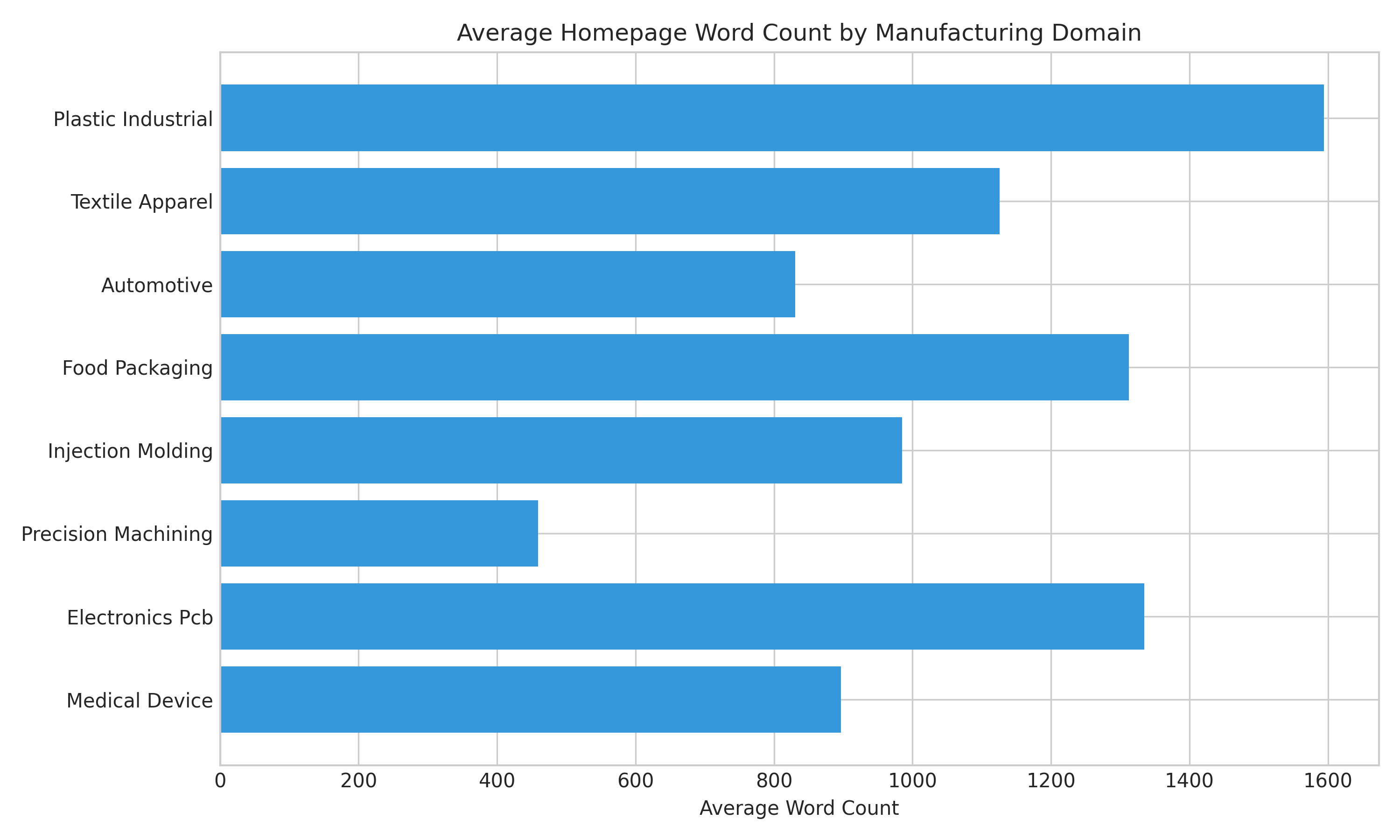

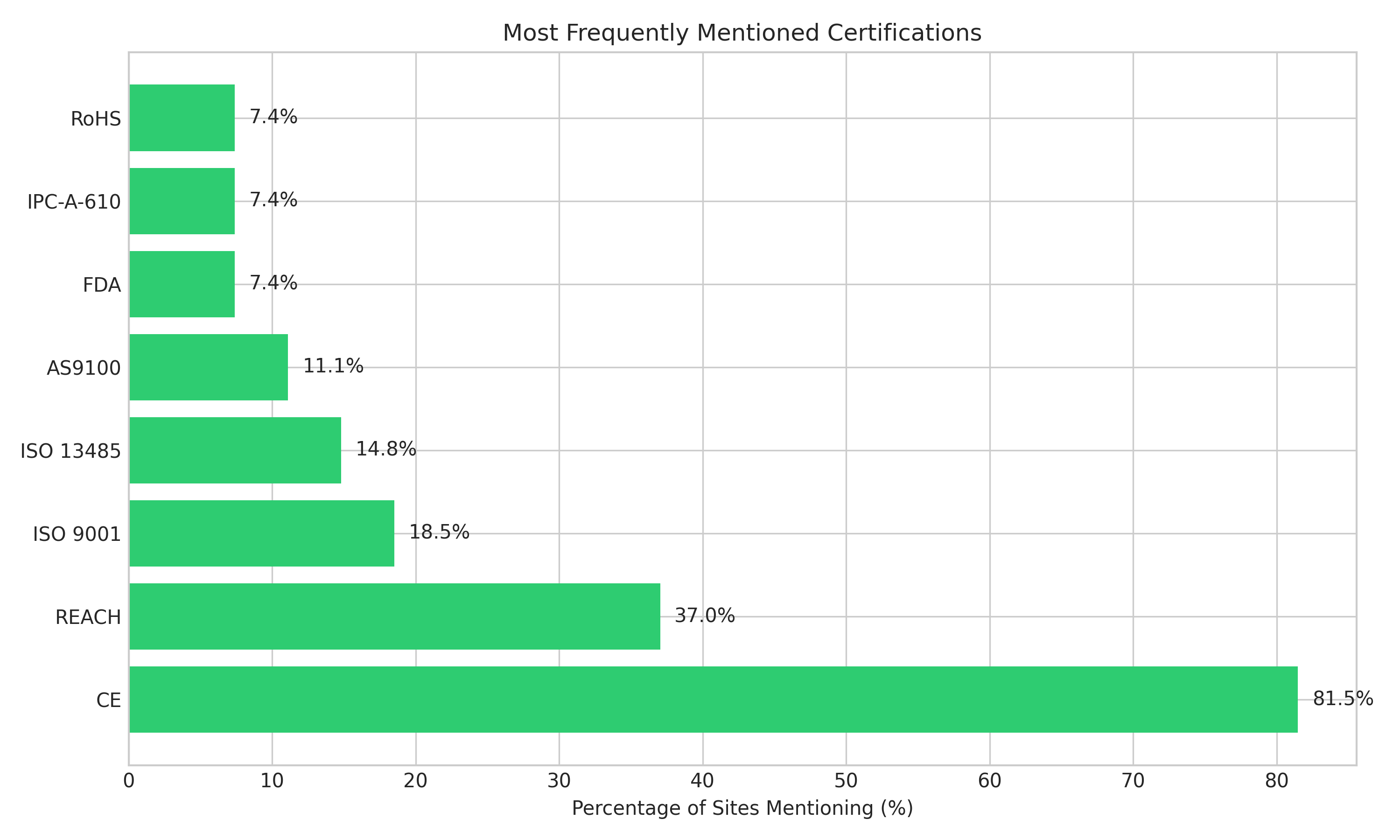

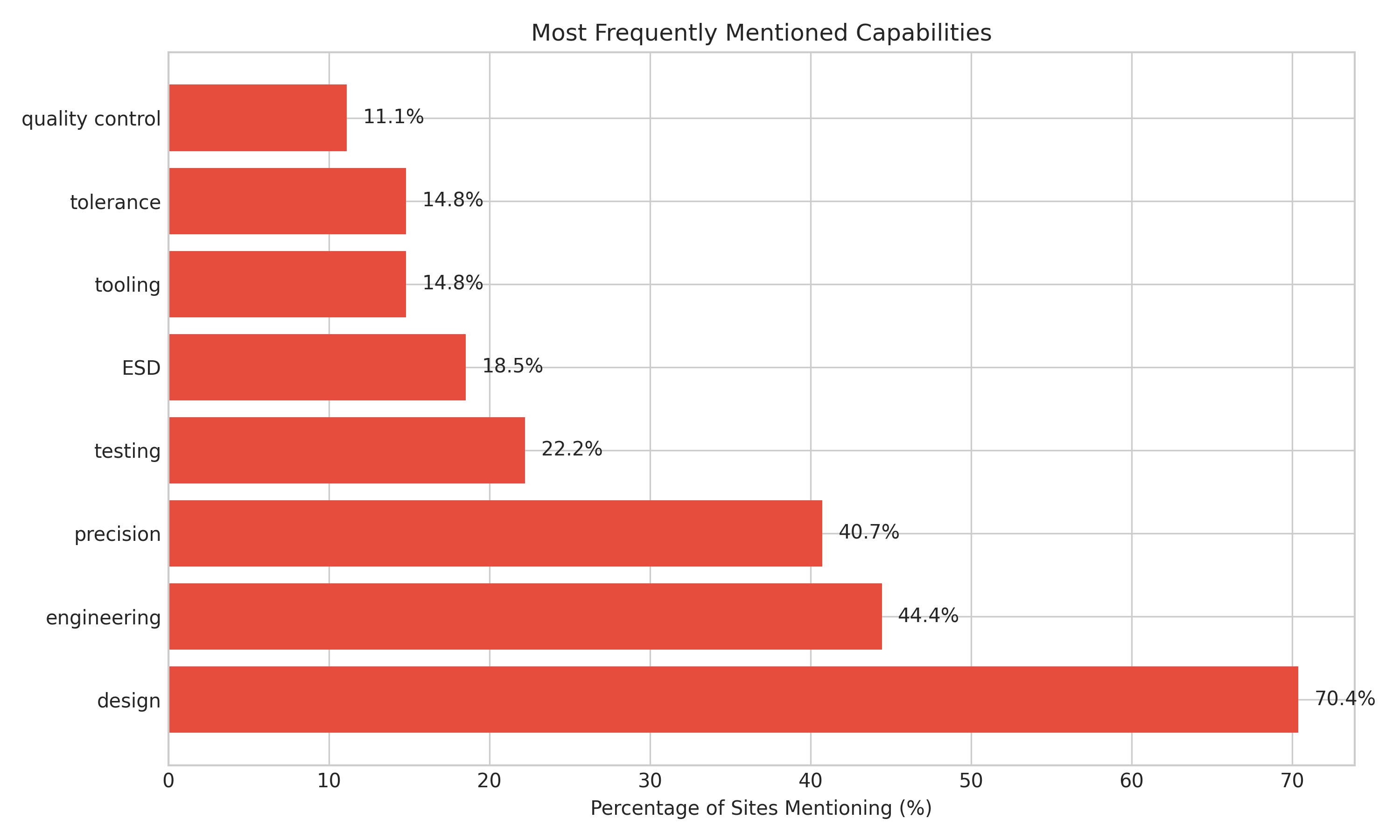

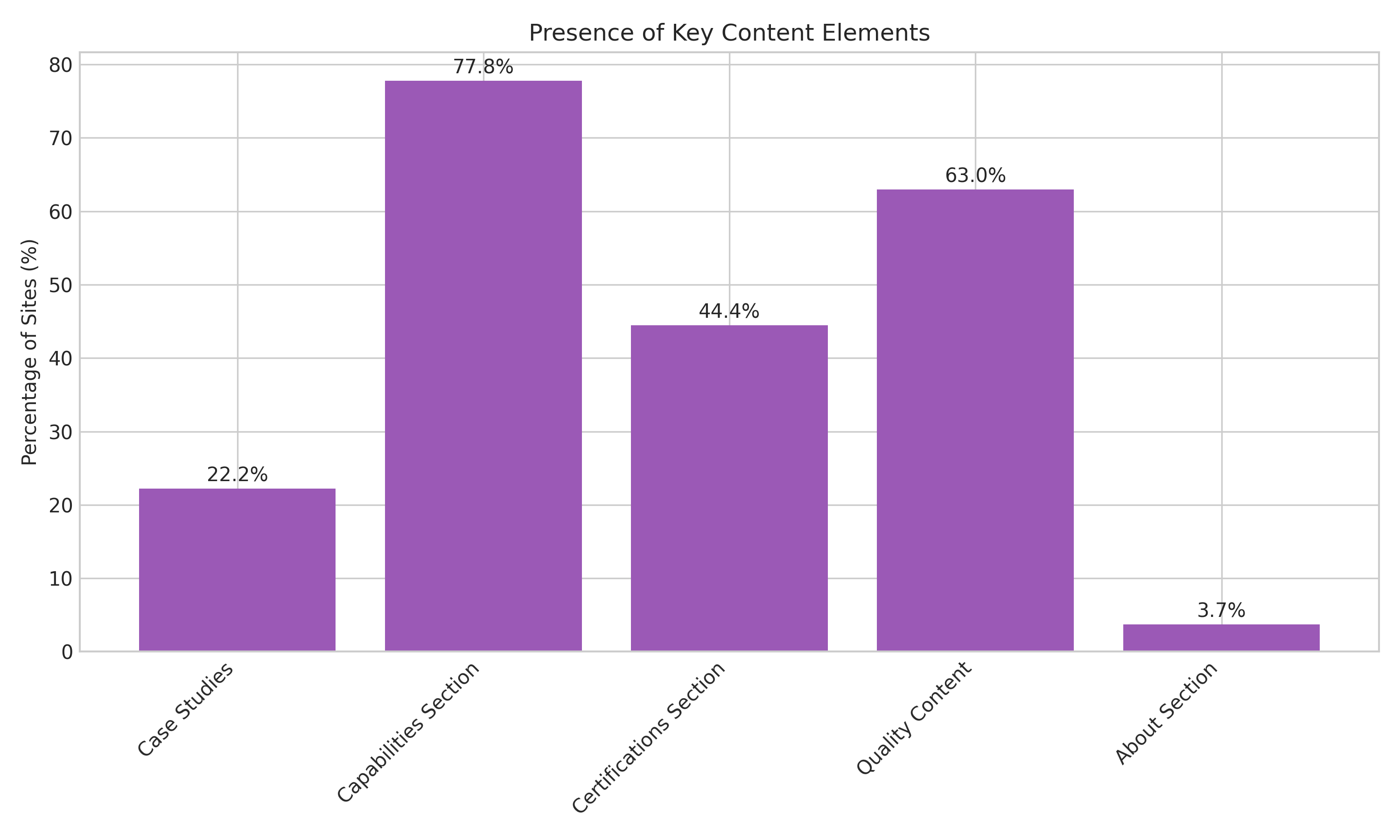

In manufacturing, brands that show up in AI recommendations tend to have higher content density, explicit capability mentions, and certifications displayed prominently in plain text, not just as logos or images. Recommended sites averaged 1,131 homepage words and 1.89 certification mentions, and made heavy use of concrete capability language such as engineering, precision, testing, and design. Dedicated capabilities and quality sections also appeared frequently.

The full manufacturing report is slated for release later in Q2 2026.

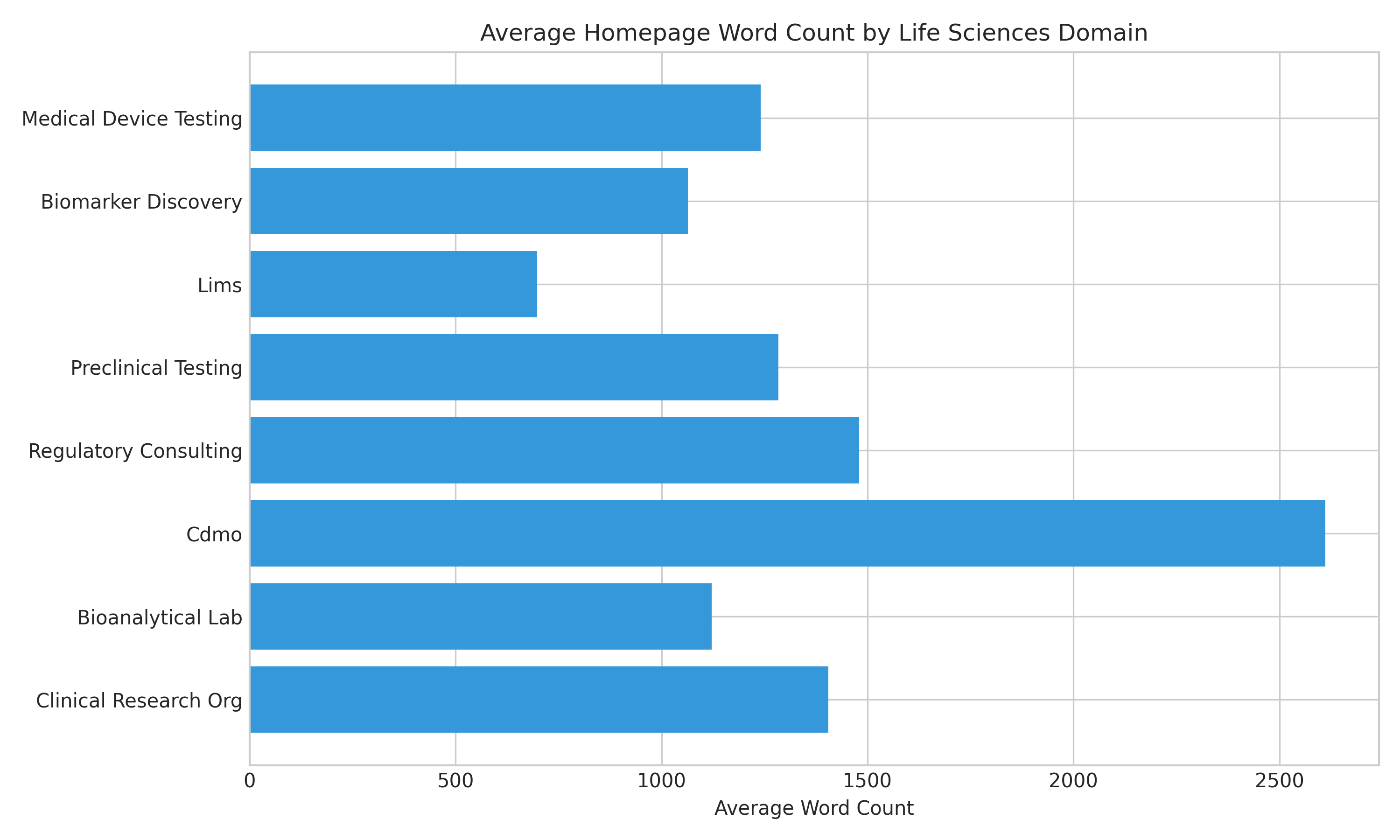

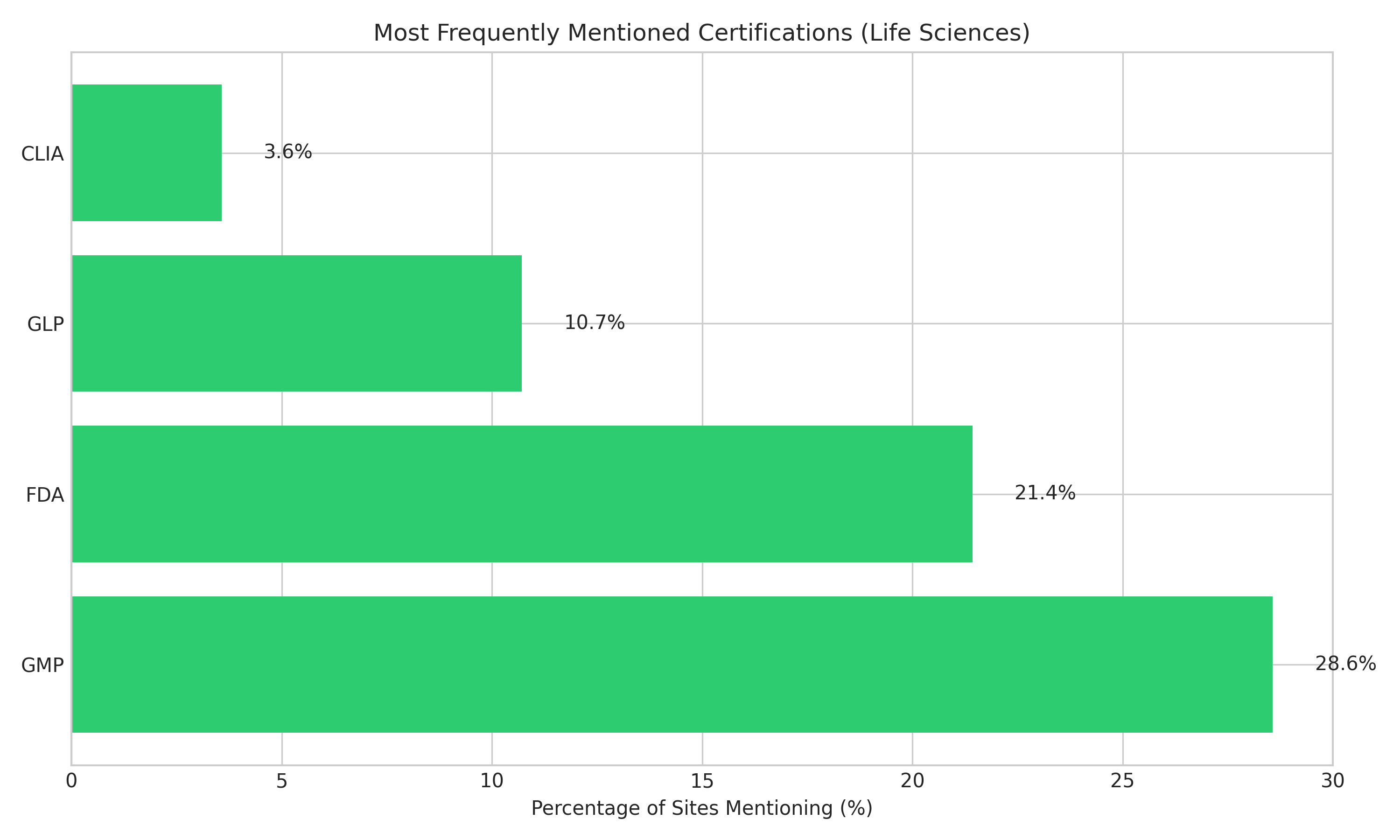

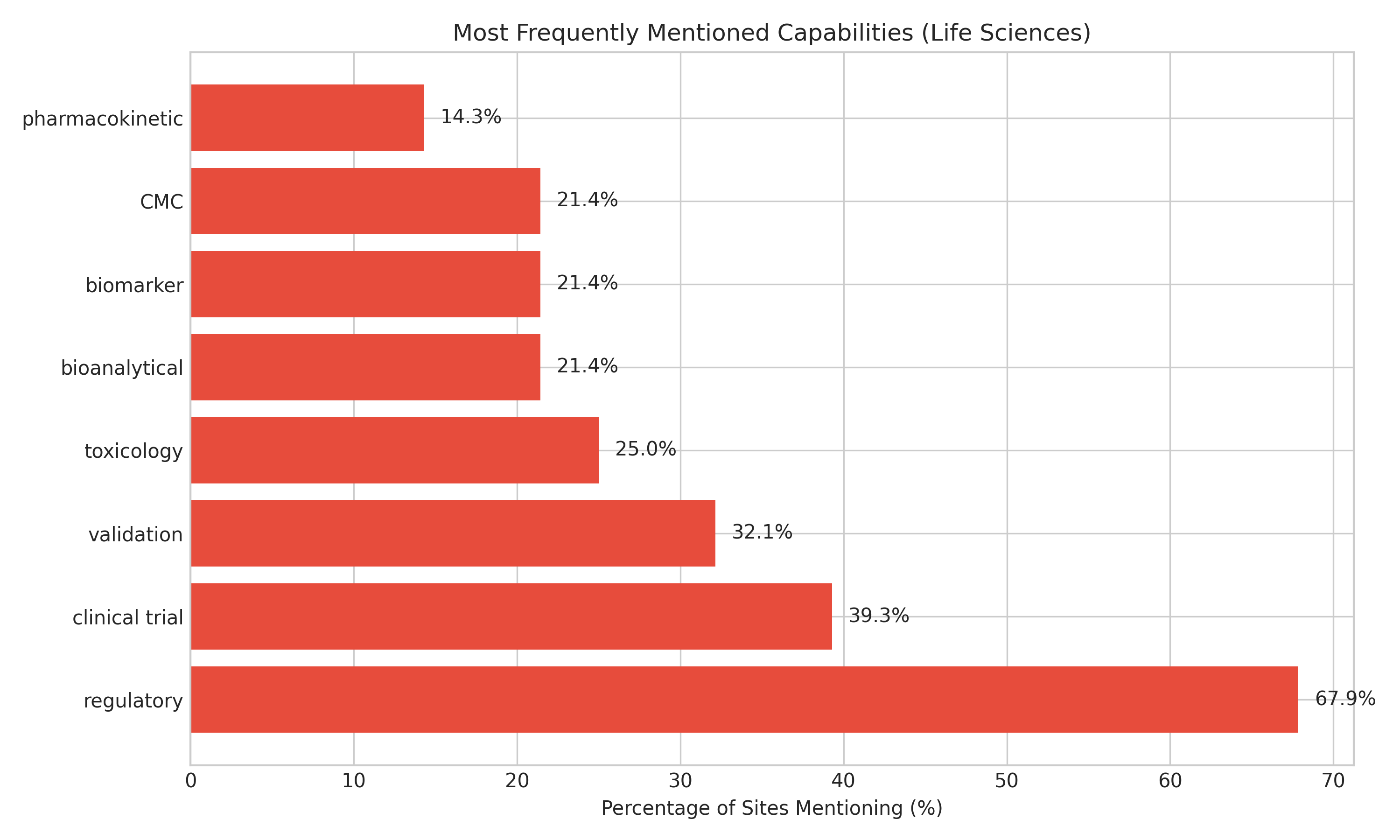

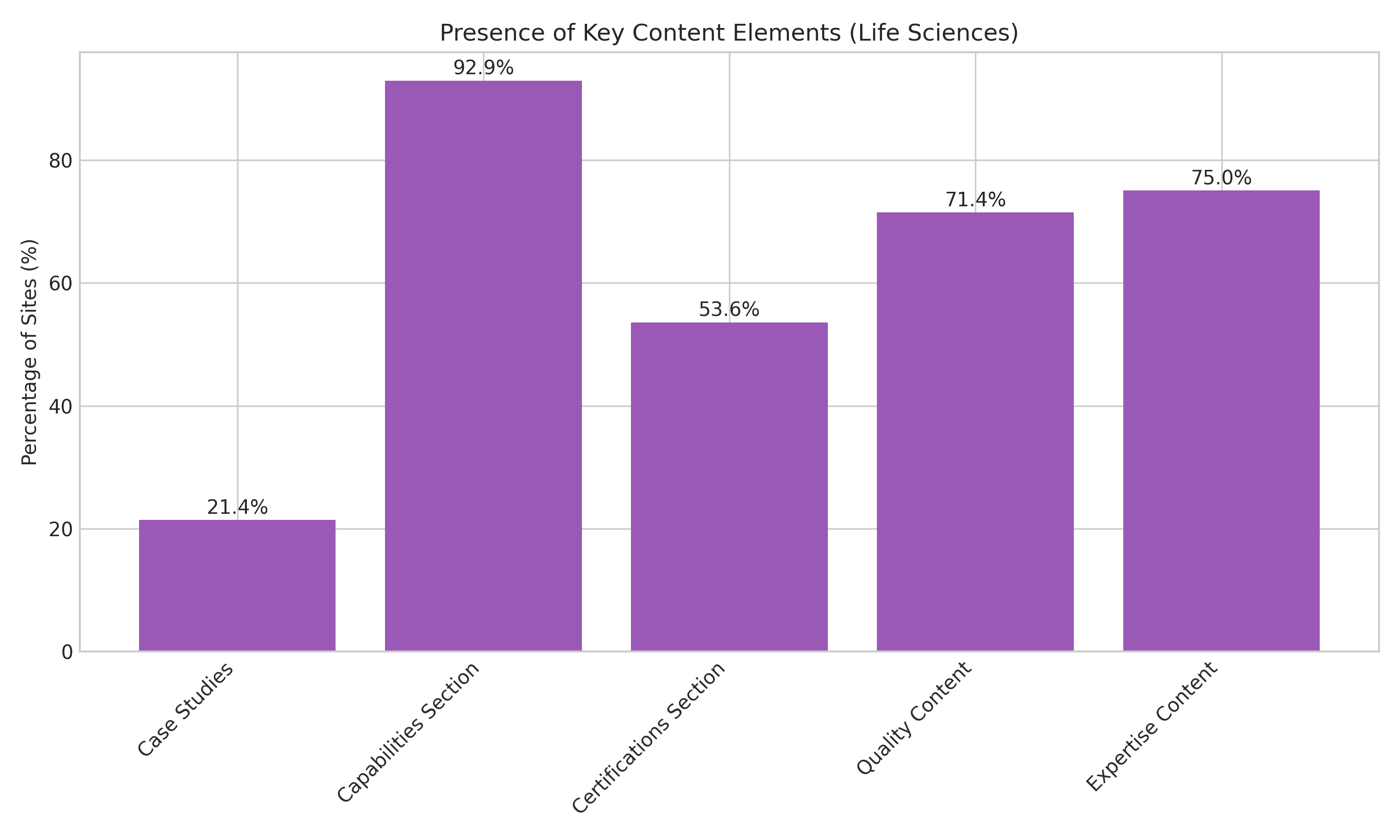

In life sciences, the pattern shifts. Certifications matter less than expertise and regulatory depth. Recommended providers averaged 1,369 homepage words, but the more important signal was explicit regulatory language. “Regulatory” appeared on 67.9% of recommended sites, far ahead of most other capability terms. Expertise content and clearly structured services mattered more than generic proof.

The full life sciences report is slated for release in Q3 2026.

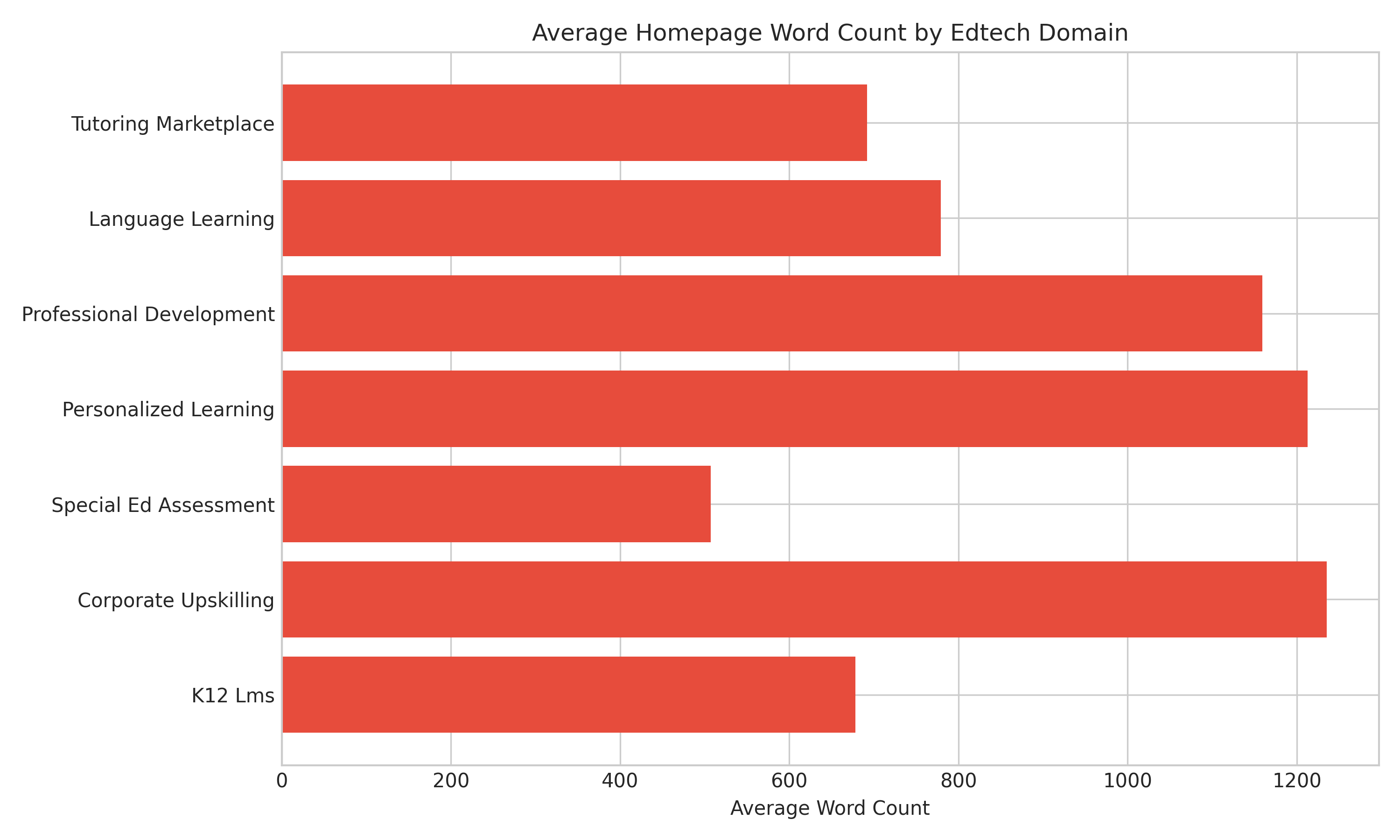

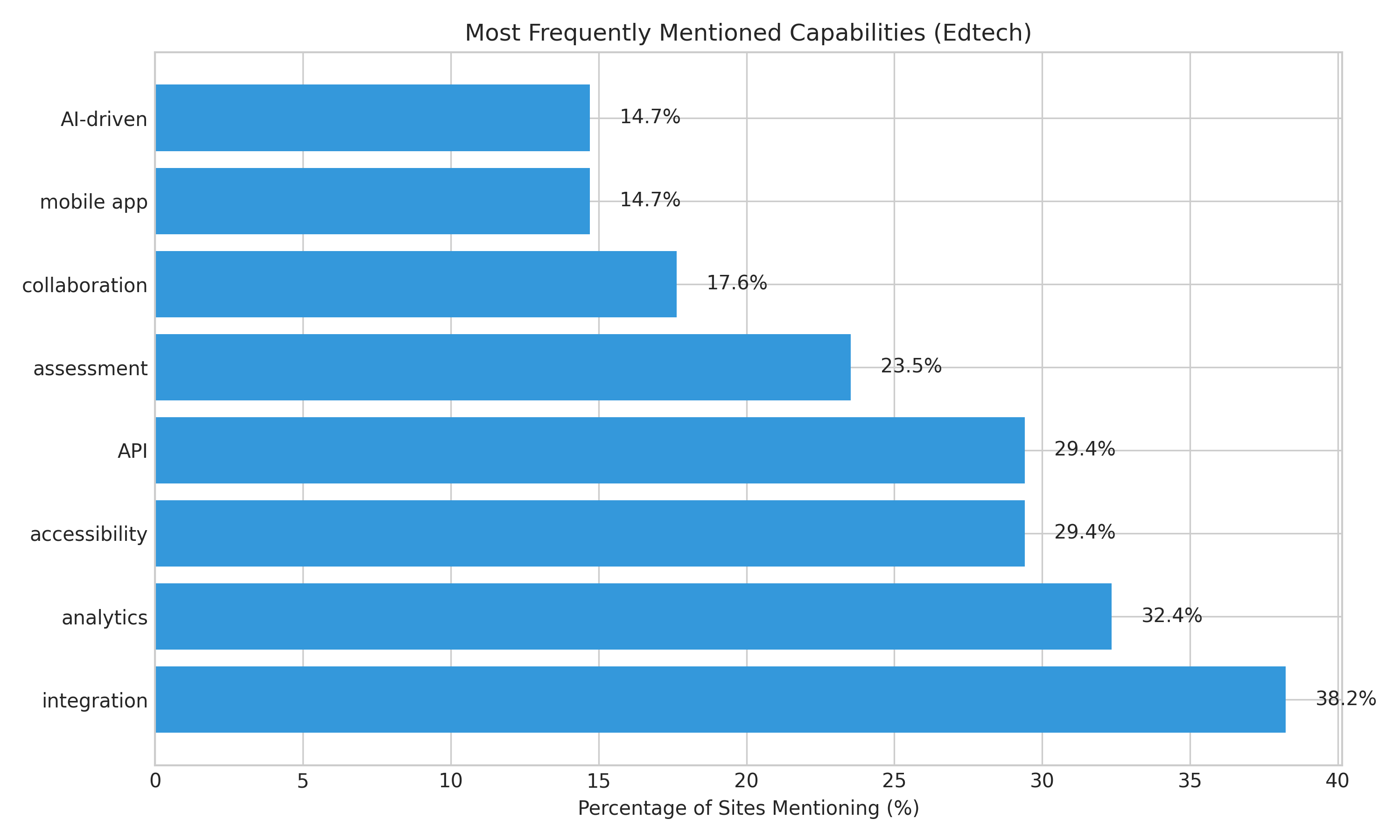

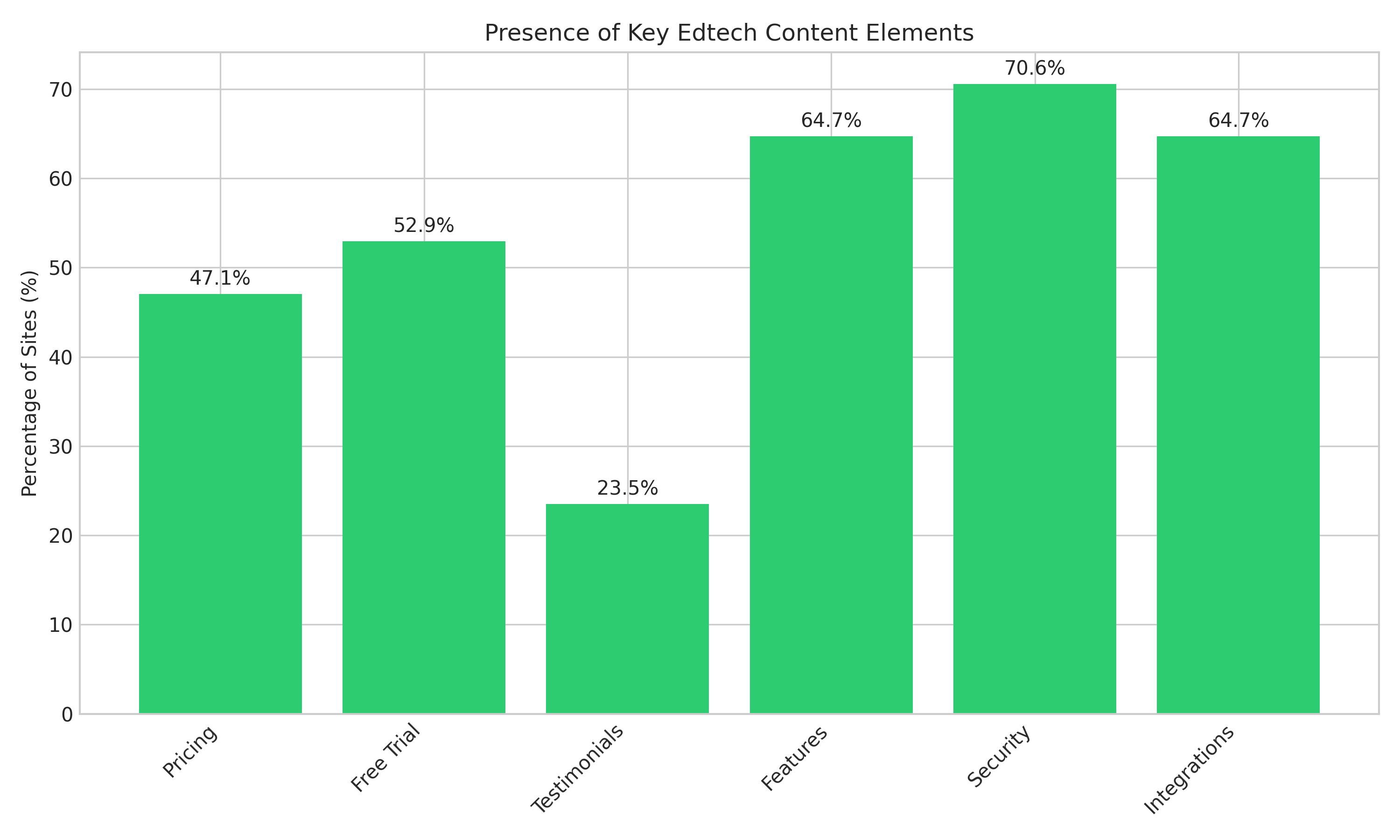

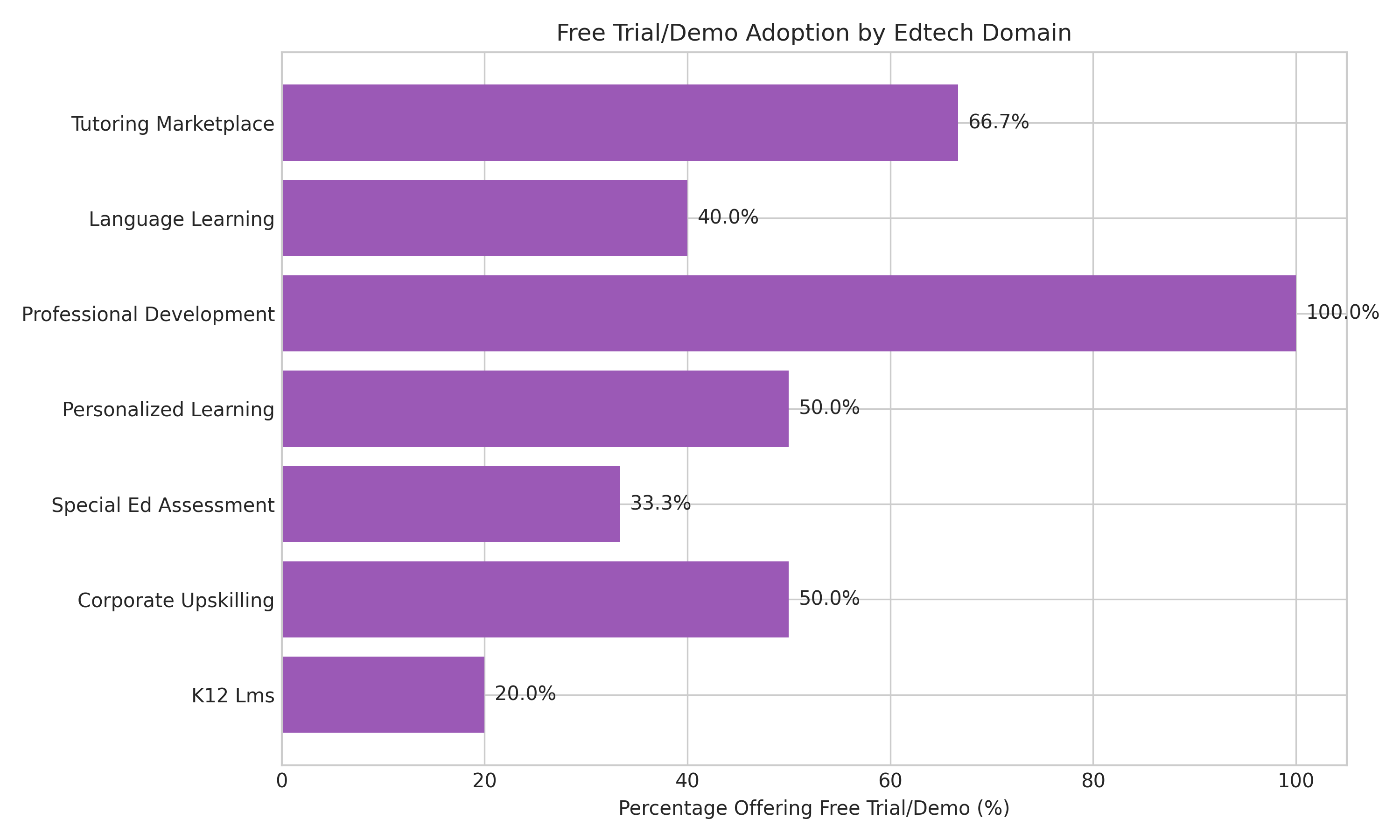

In edtech, the pattern shifts again. Long-form homepage copy matters less. Trial access, pricing transparency, integrations, and security language matter more. Recommended edtech sites averaged only 919 words, while 52.9% offered free trials and 47.1% showed pricing. Integration, API, analytics, accessibility, and privacy all emerged as key signals.

So the issue is not that AI search ignores websites. It is that it rewards websites that make themselves easy to classify and easy to trust.

Your own website is only part of the answer

This is where many teams get caught out.

You assume your highest-ranking pages should be enough. But AI answer engines often lean heavily on third-party, comparative, question-oriented content when they assemble recommendations. They want corroboration. They want external evidence. They want language that sounds like the buyer’s question, not just your brand narrative.

That means your website alone rarely carries the whole job.

If your brand is barely discussed outside your own domain, AI systems have less confidence naming you. If third-party sites do mention you but describe what you do inconsistently, you become harder to retrieve. If review sites, directories, podcasts, association pages, partner pages, or industry roundups do not reinforce your positioning, your Google rankings may still be real while your AI visibility stays weak.

That is why this is not just an SEO problem. It is a content operations problem.

You do not need more content for the sake of it. You need a better content value chain, one that turns your positioning into a consistent footprint across your own site, third-party mentions, sales assets, and category-language touchpoints.

What is usually going wrong

When a brand ranks on Google but disappears in ChatGPT or Perplexity, one or more of five things is usually happening.

First, the site is too generic. It ranks because of domain history, backlinks, or topical breadth, but the pages do not make a precise case for why the brand belongs in a specific shortlist.

Second, the important facts are hard to parse. Certifications sit inside images. Capabilities are buried in design-heavy sections. Integrations, regulatory support, service scope, or buyer-fit signals are implied rather than stated.

Third, the brand has weak third-party reinforcement. There is little off-site evidence that supports the exact category claims the business wants AI engines to repeat.

Fourth, the site lacks question-shaped content. AI answer engines respond to buyer phrasing. If your site only speaks in brand language and never mirrors the actual commercial questions buyers ask, retrieval gets weaker.

Fifth, the company is winning in SEO but losing in content-market fit. You may rank for terms that drive traffic, while still failing to create the content assets that help buyers and AI systems understand why you are the right fit.

That last point matters most.

Ranking is not the same as recommendation readiness.

This is why GEO matters

The cleanest way to think about this is that you are now playing two visibility games.

One is classic search visibility. The other is generative visibility.

SEO helps you get found in lists of links.

GEO, or generative engine optimization, helps you get named inside answers.

Those are related, but they are not interchangeable.

Content RevOps treats this as an operational issue, not a publishing issue. The job is not to spray more blog posts into the market. The job is to build a content system that increases the odds your brand gets retrieved, trusted, and repeated across the buyer journey.

That means your content has to function as infrastructure.

It has to support:

discoverability in search

retrievability in AI systems

comparability in shortlist moments

conversion once the buyer lands

If any of those break, your pipeline leaks.

What to fix if you want your brand to appear in AI answers

Start with your core commercial pages.

Do they explicitly state your capabilities, buyer types, industry focus, proof points, integrations, compliance, or differentiators in plain text? Or are they full of abstraction?

Then look at the structure.

Do you have clear sections for capabilities, industries served, quality or compliance, use cases, and outcomes? Our manufacturing and life sciences analyses both point to structured, clearly labelled content as a recurring pattern among brands that AI systems recommend.

Then look at your industry-specific signals.

If you are in manufacturing, certifications and capabilities likely need to become far more explicit. If you are in life sciences, regulatory expertise and technical language likely need more prominence than generic trust signals. If you are in edtech, trial access, pricing clarity, integrations, and privacy language may matter more than adding another 1,000 words of homepage copy.

Then audit your off-site footprint.

Could a model find consistent evidence of who you are from third-party sources alone? Would industry directories, partner pages, interviews, associations, podcasts, review sites, and comparison articles all describe you in similar terms? Or would they fragment your identity?

Finally, check whether your content actually answers buyer questions.

Your site should not just say “what we do.” It should also help a model answer prompts like:

“Who are the best vendors for X?”

“Which providers support Y compliance need?”

“What tools integrate with Z?”

“What companies specialise in this exact use case?”

That is the bridge between content and retrieval.

The real answer

So why is your brand not showing up in ChatGPT or Perplexity answers when you rank on Google?

Because ranking on Google proves you can be found.

It does not prove you can be retrieved, trusted, and named inside an AI-generated recommendation.

AI systems are harsher. They want tighter signals. They want clearer facts. They want stronger third-party reinforcement. They want content that maps cleanly to buyer questions. And they reward different things in different industries.

That is the shift leaders need to understand.

This is not a reason to panic about SEO. It is a reason to stop treating AI visibility as a byproduct of SEO.

It is its own operating layer now.

And the brands that win will be the ones that treat content as a go-to-market system, not a publishing function.

Why are you missing from AI-generated shortlists?

Book a call and we’ll show you exactly why AI engines don’t retrieve your brand, and how to fix it.

About the Author

Founder & CEO, Content RevOps

Stefan Kalpachev is the founder and CEO of Content RevOps, where he helps B2B SaaS companies transform their content into predictable pipeline. With a background in content marketing and revenue operations, Stefan has developed a unique methodology that bridges the gap between content creation and revenue generation.
